Insights from recent episode analysis

Insights are generated by CastFox AI using publicly available data, episode content, and proprietary models.

Total monthly reach

Estimated from 5 chart positions in 5 markets.

By chart position

- 🇦🇺 AU · Technology · #126 · 5K to 30K

- 🇸🇬 SG · Technology · #32 · 10K to 30K

- 🇳🇿 NZ · Technology · #108 · 500 to 3K

- 🇭🇰 HK · Technology · #157 · 500 to 3K

- 🇦🇷 AR · Technology · #186 · 500 to 3K

Per-Episode Audience

Est. listeners per new episode within ~30 days

5.0K to 21K · 🎙 Daily cadence · 145 episodes · Last published 1w ago

Monthly Reach

Unique listeners across all episodes (30 days)

17K to 69K · 🇦🇺 43% · 🇸🇬 43% · 🇳🇿 4% · +2 more

Active Followers

Loyal subscribers who consistently listen

6.6K to 28K

Market Insights

Platform Distribution

Reach across major podcast platforms, updated hourly

Total Followers

—

Total Plays

—

Total Reviews

—

* Data sourced directly from platform APIs and aggregated hourly across all major podcast directories.

On the show

From 12 eps

Host

Recent guests

Recent episodes

Notes from inside China's AI labs

May 7, 2026

16m 35s

The distillation panic

May 4, 2026

8m 52s

My bets on open models, mid-2026

Apr 15, 2026

6m 57s

The inevitable need for an open model consortium

Apr 11, 2026

5m 45s

Claude Mythos and misguided open-weight fearmongering

Apr 9, 2026

8m 36s

| Date | Episode | Topics | Guests | Brands | Places | Keywords | Sponsor | Length | |

|---|---|---|---|---|---|---|---|---|---|

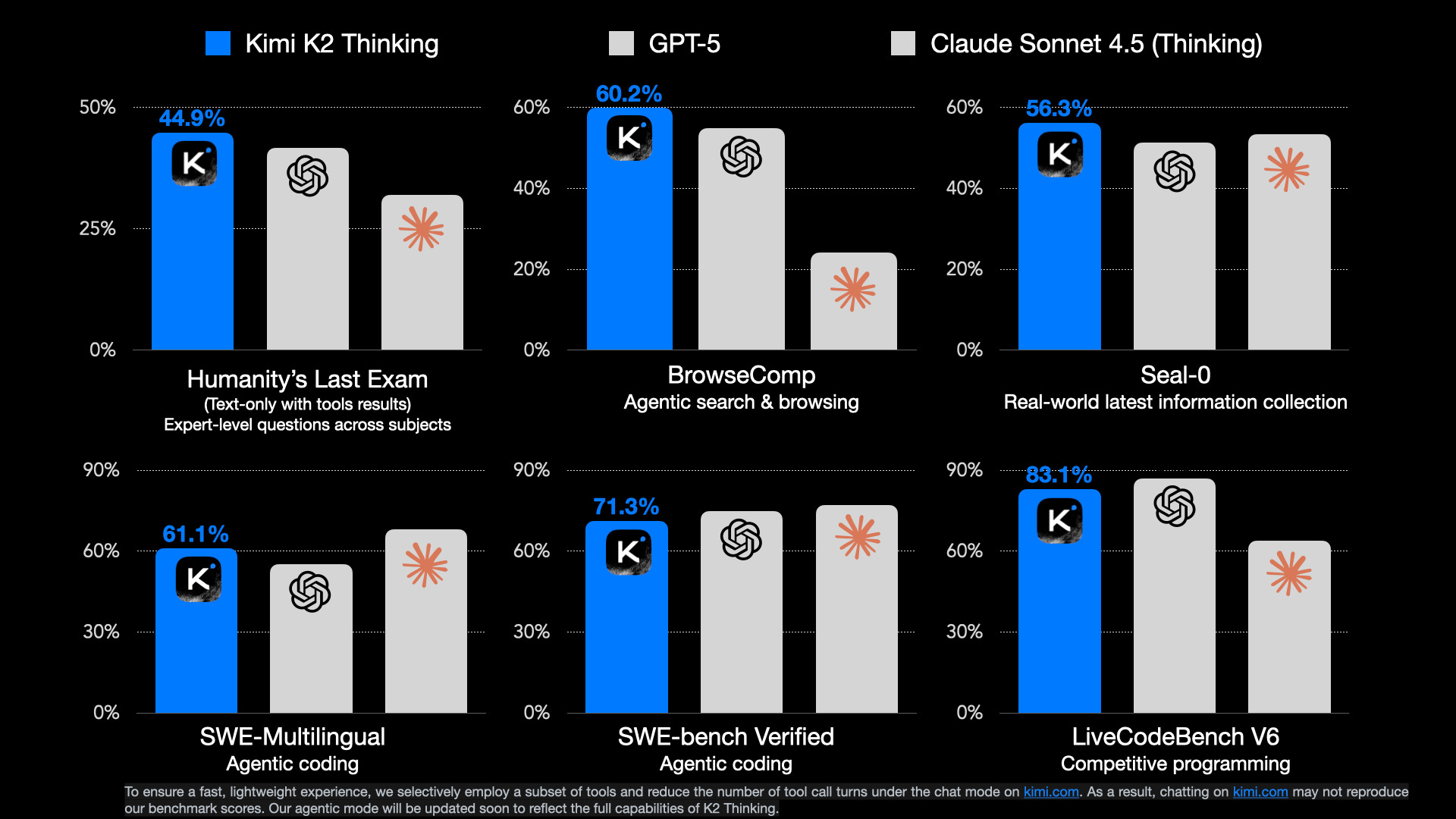

| 5/7/26 |  Notes from inside China's AI labs✨ | AI researchChinese technology+3 | — | Interconnects AI | ChinaHangzhou+1 | AIChina+5 | — | 16m 35s | |

| 5/4/26 |  The distillation panic✨ | distillation attacksAI capabilities+3 | — | AnthropicChinese labs | ChinaU.S. | distillation attacksAI+5 | — | 8m 52s | |

| 4/15/26 |  My bets on open models, mid-2026✨ | open modelsAI capabilities+3 | — | — | — | open modelsclosed labs+3 | — | 6m 57s | |

| 4/11/26 |  The inevitable need for an open model consortium✨ | open modelsAI consortium+4 | Percy Liang | StanfordNemotron+7 | — | open modelsAI consortium+6 | — | 5m 45s | |

| 4/9/26 |  Claude Mythos and misguided open-weight fearmongering✨ | AI modelscybersecurity+3 | — | Claude MythosOpenAI | — | Claude Mythosopen-weight models+5 | — | 8m 36s | |

| 4/3/26 |  Gemma 4 and what makes an open model succeed✨ | open modelsAI development+4 | — | Gemma 4Llama 3+16 | — | open modelsAI+6 | — | 8m 55s | |

| 3/22/26 |  Lossy self-improvement✨ | AI developmentrecursive self-improvement+4 | — | AIAI industry+5 | — | AIrecursive self-improvement+5 | — | 13m 23s | |

| 3/18/26 |  GPT 5.4 is a big step for Codex✨ | AImodel review+3 | — | GPT 5.4Codex+1 | — | GPT 5.4Codex+4 | — | 6m 49s | |

| 3/16/26 |  What comes next with open models✨ | open modelsAI ecosystem+3 | — | LlamaDeepSeek | — | open modelsAI+5 | — | 18m 08s | |

| 3/6/26 |  Dean Ball on open models and government control✨ | open modelsgovernment control+3 | Dean W. Ball | AnthropicDepartment of War+2 | — | open modelsAI+5 | — | 35m 36s | |

Want analysis for the episodes below?Free for Pro Submit a request, we'll have your selected episodes analyzed within an hour. Free, at no cost to you, for Pro users. | |||||||||

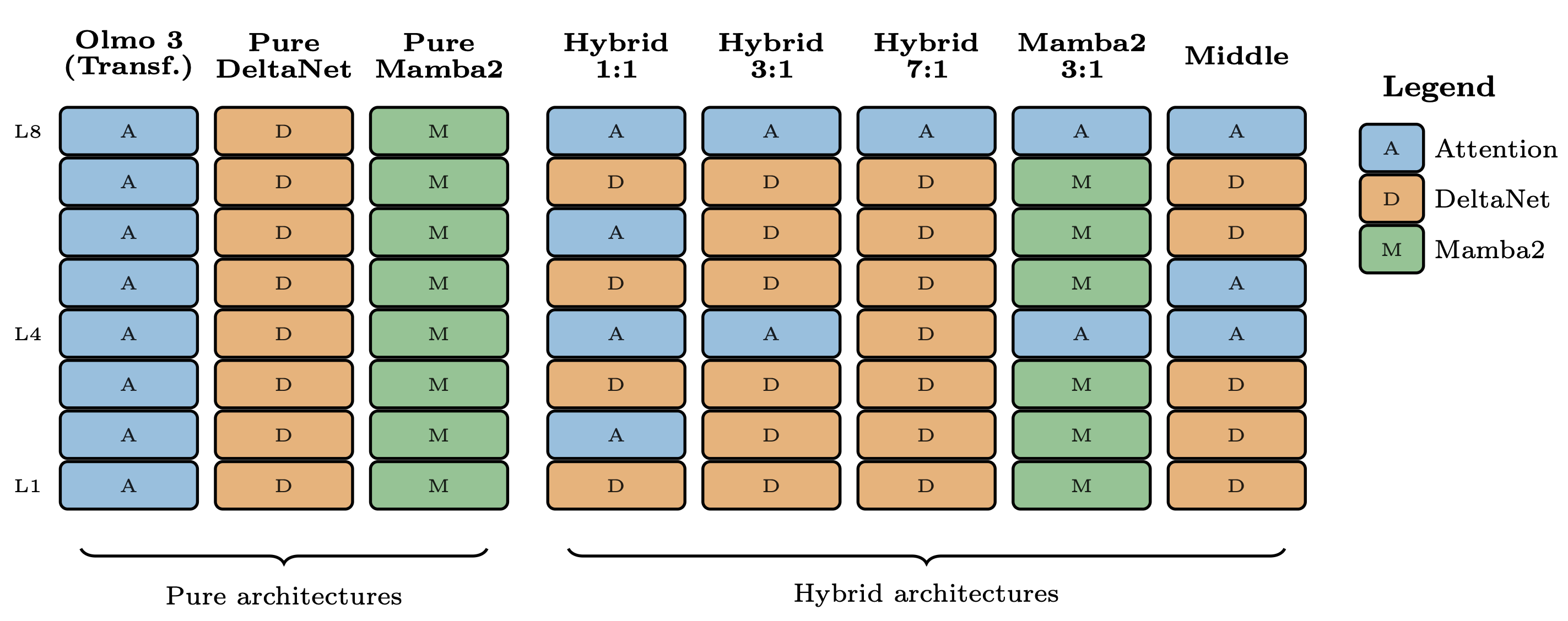

| 3/5/26 |  Olmo Hybrid and future LLM architectures✨ | hybrid architecturesopen-weight models+4 | — | Qwen 3.5Kimi Linear+9 | — | hybrid modelsRNN+6 | — | 11m 21s | |

| 2/24/26 |  How much does distillation really matter for Chinese LLMs?✨ | distillationAI+4 | — | Chinese labsAmerican API-based counterparts | — | distillationsynthetic data+4 | — | 11m 20s | |

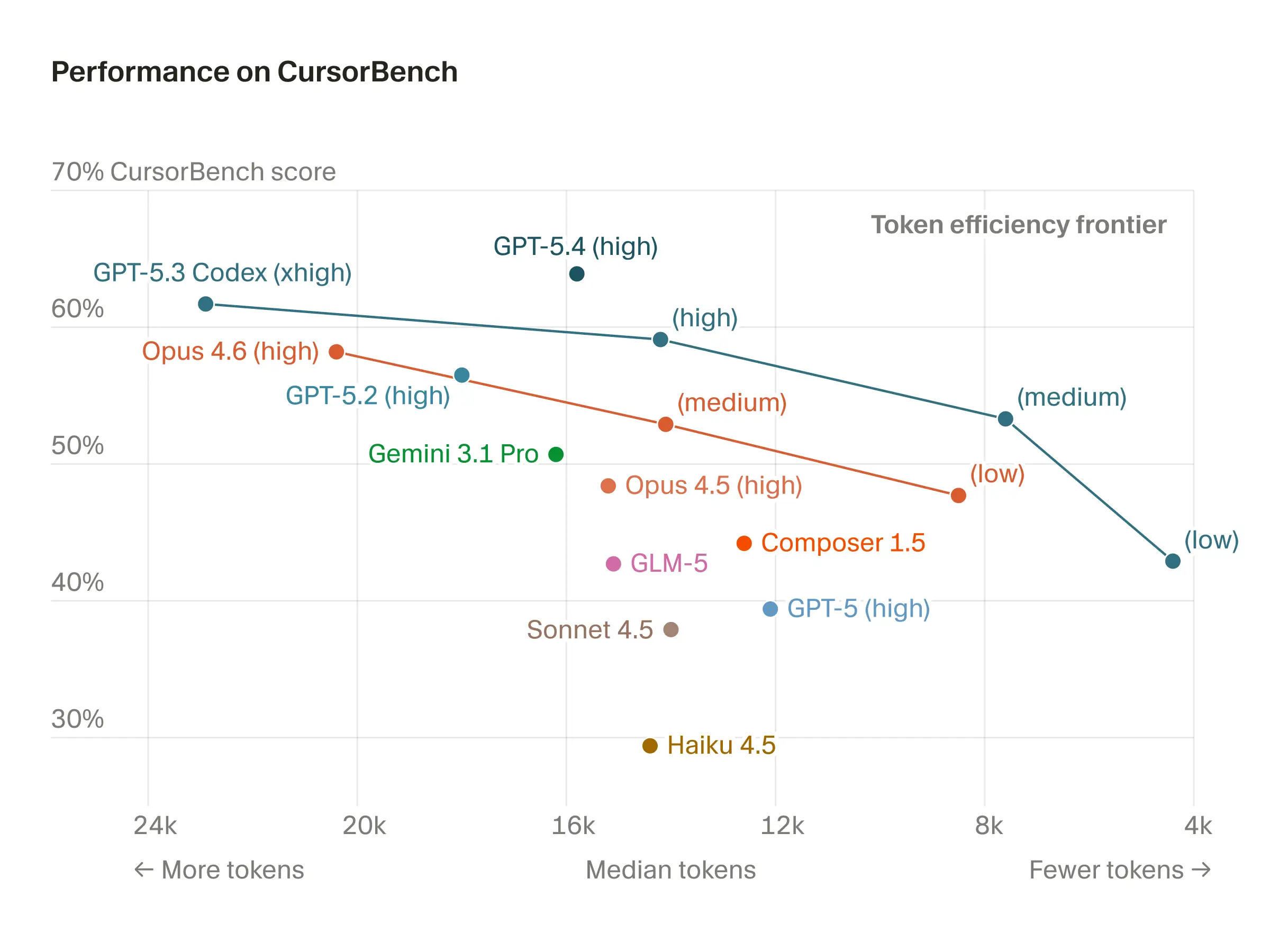

| 2/9/26 | Opus 4.6, Codex 5.3, and the post-benchmark era | | | | | | | 8m 09s | |

Last Thursday, February 5th, both OpenAI and Anthropic unveiled the next iterations of their models designed as coding assistants, GPT-5.3-Codex and Claude Opus 4.6, respectively. Ahead of this, Anthropic had a firm grasp of the mindshare as everyone collectively grappled with the new world of agents, primarily driven by a Claude Code with Opus 4.5-induced step change in performance. This post doesn’t unpack how software is changing forever, how Moltbook is showcasing the future, how ML research is accelerating, or the many broader implications, but rather how to assess, live with, and prepare for new models. The fine margins between Opus 4.6 and Codex 5.3 will be felt in many model versions this year, with Opus ahead in this matchup on usability.

Going into these releases I’d been using Claude Code extensively as a general computer agent, with some software engineering and a lot of data analysis, automation, etc. I had dabbled with Codex 5.2 (usually on xhigh, maximum thinking effort), but found it not to quite work for me among my broad, horizontal set of tasks.

For the last few days, I’ve been using both of the models much more evenly. I mean this as a great compliment, but Codex 5.3 feels much more Claude-like, where it’s much faster in its feedback and much more capable in a broad suite of tasks from git to data analysis (previous versions of Codex, including up to 5.2, regularly failed basic git operations like creating a fresh branch). Codex 5.3 takes a very important step towards Claude’s territory by having better product-market fit. This is a very important move for OpenAI, and of the two models, Codex 5.3 feels far more different from its predecessors.

OpenAI’s latest GPT, with this context, keeps an edge as a better coding model. It’s hard to describe this general statement precisely, and a lot of it is based on reading others’ work, but it seems to be a bit better at finding bugs and fixing things in codebases, such as the minimal algorithmic examples for my RLHF Book. In my experience, this is a minor edge, and the community thinks that this is most apparent in complex situations (i.e. not most vibe-coded apps). As users become better at supervising these new agents, having the best top-end ability in software understanding and creation could become a meaningful edge for Codex 5.3, but it is not an obvious advantage today. Many of my most trusted friends in the AI space swear by Codex because it can be just this tiny bit better. I haven’t been able to unlock it.

Switching from Opus 4.6 to Codex 5.3 feels like I need to babysit the model in terms of more detailed descriptions when doing somewhat mundane tasks like “clean up this branch and push the PR.” I can trust Claude to understand the context of the fix and generally get it right, where Codex can skip files, put stuff in weird places, etc.

Both of these releases feel like the companies pushing for capabilities and speed of execution in the models, but at the cost of some ease of use. I’ve found both Opus 4.6 and Codex 5.3 ignoring an instruction if I queue up multiple things to do; they’re really best when given well-scoped, clear problems (especially Codex). Claude Code’s harness has a terrible bug that makes subagents brick the terminal, where new messages say you must compact or clear, but compaction fails. Despite the massive step by Codex, OpenAI still has a large gap to close to Claude on the product side. Opus 4.6 is another step in the right direction, where Claude Code feels like a great experience. It’s approachable, it tends to work in the wide range of tasks I throw at it, and this’ll help Anthropic gain much broader adoption than Codex. If I’m going to recommend a coding agent to an audience who has limited-to-no software experience, it’s certainly going to be Claude. At a time when agents are just emerging into general use, this is a massive advantage, both in mindshare and feedback in terms of usage data.

In the meantime, there’s no cut-and-dried guideline on which agent you need to use for any use-case; you need to use multiple models all the time and keep up with the skill that is managing agents.

Assessing models in 2026

There have been many hints through 2025 that we were heading toward an AI world where benchmarks associated with model releases no longer convey meaningful signal to users. Back in the time of the GPT-4 or Gemini 2.5 Pro releases, the benchmark deltas could be easily felt within the chatbot form factor of the day: models were more reliable, could do more tasks, etc. This continued through models like OpenAI’s o3. During this phase of AI’s buildout, roughly from 2023 to 2025, we were assembling the core functionality of modern language models: tool-use, extended reasoning, basic scaling, etc. The gains were obvious.

It should be clear with the releases of both Opus 4.6 and Codex 5.3 that benchmark-based release reactions barely matter. For this release, I barely looked at the evaluation scores. I saw that Opus 4.6 had a bit better search scores and Codex 5.3 used far fewer tokens per answer, but neither of these was going to make me sure they were much better models.

Each of the AI laboratories, and the media ecosystems covering them, has been on this transition away from standard evaluations at its own pace. The most telling example is the Gemini 3 Pro release in November of 2025. The collective vibe was that Google is back in the lead. Kevin Roose, self-proclaimed “AGI-pilled” NYTimes reporter in SF, said:

“There's sort of this feeling that Google, which kind of struggled in AI for a couple of years there (they had the launch of Bard and the first versions of Gemini, which had some issues), and I think they were seen as sort of catching up to the state of the art. And now the question is: is this them taking their crown back?”

We don’t need to dwell on the depths of Gemini’s current crisis, but they have effectively no impact at the frontier of coding agents, which as an area feels the most likely for dramatic strides in performance, dare I say even for many commonly accepted definitions of AGI that center around the notion of a “remote worker.” The timeline has left them behind 2 months after their coronation, showing Gemini 3 was hailed as a false king.

On the other end of the spectrum is Anthropic. With Anthropic’s release of Claude 4 in May of 2025, I was skeptical of their bet on code; I was distracted by the glitz of OpenAI and Gemini trading blows with announcements like models achieving IMO Gold medals in mathematics or other evaluation breakthroughs.

Anthropic deserves serious credit for the focus of its vision. They were likely not the only AI lab to note the coming role of agents, but they were by far the first to shift their messaging and prioritization towards this. In my post in June of 2025, a month after Claude 4 was released, I was coming around to them being right to deprioritize standard benchmarks:

“This is a different path for the industry and will take a different form of messaging than we’re used to. More releases are going to look like Anthropic’s Claude 4, where the benchmark gains are minor and the real world gains are a big step. There are plenty of more implications for policy, evaluation, and transparency that come with this. It is going to take much more nuance to understand if the pace of progress is continuing, especially as critics of AI are going to seize the opportunity of evaluations flatlining to say that AI is no longer working.”

This leaves me reflecting on the role of Interconnects’ model reviews in 2026. 2025 was characterized by many dramatic, day-of model release blog posts, with the entry of many new Chinese open model builders, OpenAI’s first open language model since GPT-2, and of course the infinitely hyped GPT-5. These timely release posts still have great value: they center the conversation around the current snapshot of a company vis-a-vis the broader industry. But if models remain similar, they’ll do little to disentangle the complexity in mapping the current frontier of AI. In order to serve my role as an independent voice tracking the frontier models, I need to keep providing regular updates on how I’m using models, why, and why not. Over time, the industry is going to develop better ways of articulating the differences in agentic models. For the next few months, maybe even years, I expect the pace of progress to be so fast and uneven in agentic capabilities that consistent testing and clear articulation will be the only way to monitor it.

This is a public episode. If you'd like to discuss this with other subscribers or get access to bonus episodes, visit www.interconnects.ai/subscribe